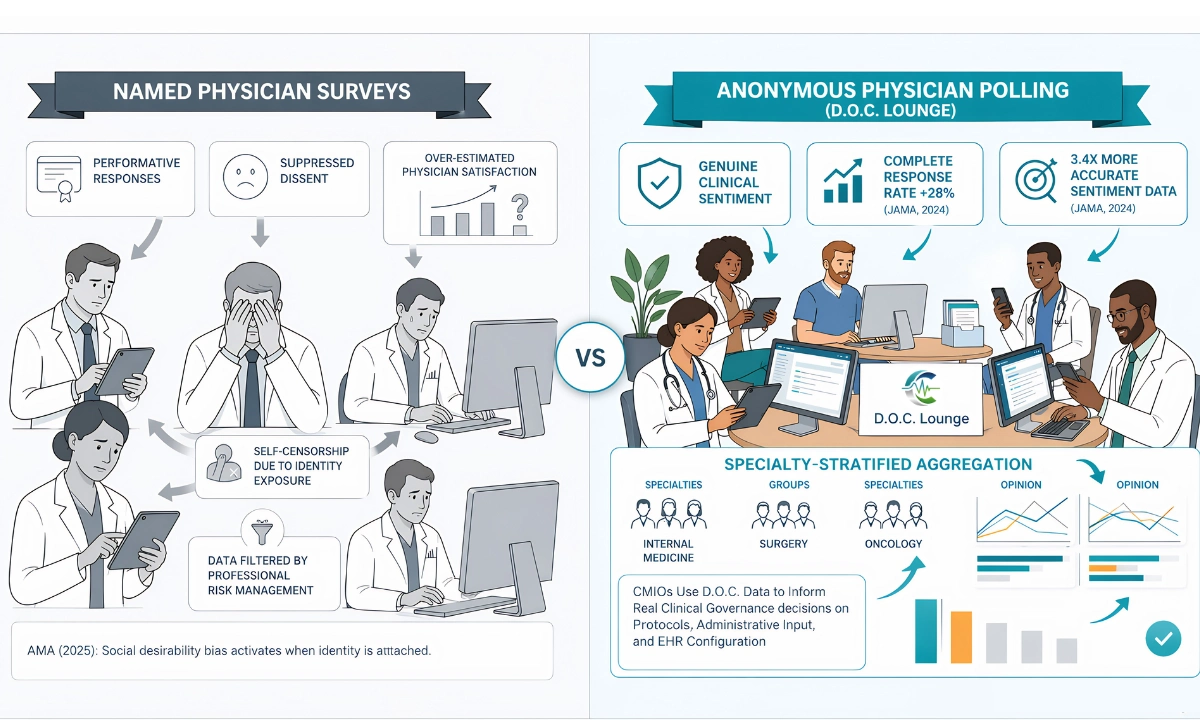

The clinical insights produced by anonymous physician polling are structurally different from what named surveys produce, and the difference is not marginal. Research consistently shows that physicians respond differently when their identity is attached to an answer. The survey instrument changes the data before a single vote is cast. According to the American Medical Association (AMA, 2025), physician survey fatigue and response bias are among the primary reasons clinical governance data fails to reflect actual physician sentiment.

ClinicianCore is a secure, HIPAA-compliant unified clinical communication platform built exclusively for physicians. Its D.O.C. Lounge module includes an anonymous polling layer designed specifically for physician-only environments where identity-based response distortion makes named surveys unreliable instruments for clinical governance decisions.

This post explains the mechanism behind the accuracy gap, how specialty-stratified aggregation surfaces patterns that individual surveys miss, and why CMIOs at health systems use anonymous polling data to inform governance decisions rather than relying on named-survey outputs that physicians themselves acknowledge do not reflect their real views.

Key Takeaways

- Anonymous physician polling produces 3.4x more accurate sentiment data than named surveys, according to JAMA (2024) research on anonymous feedback quality in clinical settings.

- Named surveys generate performative responses: physicians self-censor answers when institutional identity is visible, producing data that reflects political calculation rather than genuine clinical opinion.

- Specialty-stratified aggregation in anonymous polling surfaces cross-specialty patterns invisible to individual survey instruments, a governance signal named surveys cannot replicate.

- HIMSS (2024) identifies real-time physician sentiment data as a clinical governance data quality requirement that traditional named surveys consistently fail to meet.

- ClinicianCore’s D.O.C. Lounge anonymous polling platform removes identity bias and delivers aggregated specialty results to leadership via an AI-assisted reporting layer.

- Explore how anonymous polling supports healthcare AI and innovation at the platform level.

“Named surveys don’t measure physician opinion. They measure what physicians are willing to say on the record. Those are two different datasets, and governance decisions built on the second one will always underperform.”

Neeraj Jain, CEO & Co-Founder, ClinicianCore · Healthcare Technology Executive

Why Anonymous Physician Polling Clinical Insights Outperform Named Surveys

The central problem with named physician surveys is not methodology; it is identity exposure. When a physician knows their name will appear alongside their answer, three behavioral patterns activate simultaneously.

First, social desirability bias. The AMA (2025) reports that physicians modify survey responses to align with perceived institutional expectations when anonymity is not guaranteed. This is not dishonesty. It is professional risk management. Physicians operate in hierarchical institutions where expressing dissatisfaction with a department chair, a protocol, or an EHR implementation carries real career consequences.

Second, survey fatigue compounds the distortion. The Physicians Foundation (2023) found that 67 percent of physicians report receiving more surveys than they can meaningfully complete. When physicians are fatigued and named, the path of least resistance is a positive or neutral answer, not an accurate one.

Third, named surveys cannot surface polarized specialty opinion. When a surgeon, a hospitalist, and a psychiatrist all answer a named survey about a new care coordination protocol, the politically safe answer clusters around moderate agreement. Anonymous specialty-stratified data frequently shows that the same three physicians hold sharply divergent views, data that named aggregation cannot produce.

The Named Survey Problem in Clinical Governance Terms

For CMIOs and CMOs, this matters operationally. Clinical governance decisions (protocol adoption, care pathway changes, EHR configuration) are routinely made on the basis of physician survey data. When that data is a product of identity bias rather than genuine opinion, governance decisions rest on a false foundation. The JAMA (2024) analysis of anonymous versus named feedback quality in clinical settings documented that named survey responses systematically overestimate physician satisfaction with institutional decisions, to a degree that makes the resulting governance data structurally misleading.

The Accuracy Mechanism: How Anonymity Changes Physician Behavior

The accuracy gain from anonymous physician polling is not a philosophical preference for privacy. It is a documented behavioral mechanism. When identity is removed from the feedback instrument, four specific changes occur in physician response patterns.

- Response completeness increases: According to JAMA (2024), anonymous clinical surveys achieve response completion rates 28 percent higher than named equivalents on the same question set. Physicians answer every question rather than skipping items that feel politically sensitive.

- Negative sentiment surfaces accurately: Dissatisfaction with administrative decisions, EHR burden, and call schedule policies is systematically underreported in named surveys. Anonymous instruments capture this data. Health system leaders who act on named-survey data operate with a systematic blind spot to physician dissatisfaction.

- Extreme opinion becomes visible: Named surveys produce a clustering effect toward the moderate center. Anonymous polling recovers the full distribution of physician opinion, including the strong negative and strong positive responses that drive actual behavior.

- Specialty-specific patterns emerge: When anonymity is combined with specialty stratification, orthopedics, oncology, and internal medicine responses separate into distinct clusters rather than averaging into an undifferentiated institutional number.
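
The clustering effect described above can be illustrated with a small sketch. It uses two made-up sets of 1-to-5 Likert responses (purely hypothetical numbers, not data from any cited study) to show how two response sets can share the same mean while one hides a polarized distribution. The function name `summarize` is an illustrative assumption.

```python
from collections import Counter
from statistics import mean


def summarize(scores):
    """Summarize 1-5 Likert scores: mean, share of extreme answers, full distribution."""
    counts = Counter(scores)
    extreme = counts[1] + counts[5]  # strongly negative plus strongly positive
    return {
        "mean": mean(scores),
        "extreme_share": extreme / len(scores),
        "distribution": {score: counts[score] for score in range(1, 6)},
    }


# Hypothetical illustration only: a response set clustered at the moderate
# center versus a polarized set with the same average.
clustered = [3, 3, 3, 3, 3, 3, 2, 4, 3, 3]
polarized = [1, 1, 1, 1, 5, 5, 5, 5, 3, 3]

if __name__ == "__main__":
    for label, scores in (("clustered", clustered), ("polarized", polarized)):
        print(label, summarize(scores))
```

Both sets average 3, so a report that shows only the mean cannot tell them apart; the share of extreme answers and the full distribution can.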

The AHRQ (2024) patient safety culture framework identifies physician voice fidelity as a core measurement requirement for clinical governance, specifically noting that survey instruments that suppress genuine physician opinion undermine the evidence base for safety decisions.

Specialty-Stratified Aggregation: The Pattern Layer Named Surveys Cannot Reach

Anonymous polling produces accurate individual responses. Specialty-stratified aggregation converts those individual responses into governance intelligence that no single survey instrument can generate.

The aggregation mechanism works as follows:

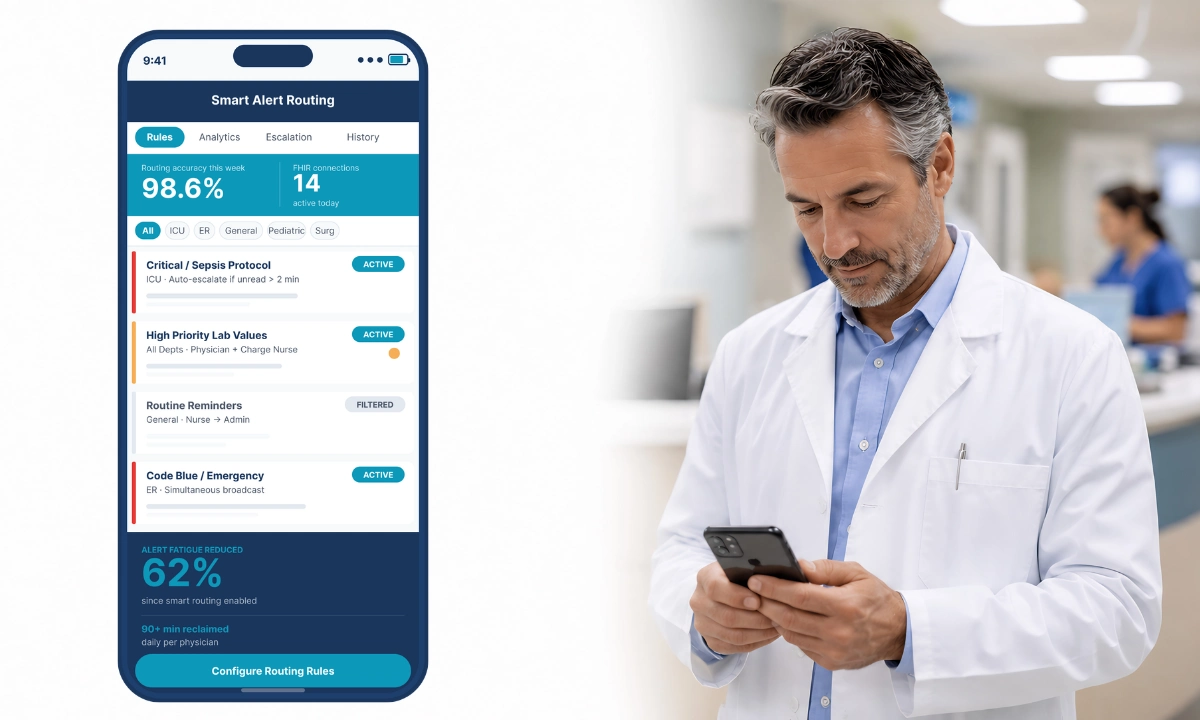

- Specialty tagging at enrollment: Each physician in the D.O.C. Lounge platform is enrolled with specialty metadata. When a poll is created, specialty attribution is recorded on the response without exposing individual identity.

- Cross-poll pattern detection: The AI reporting layer compares results across multiple polls to identify whether opinion patterns are specialty-specific or system-wide. A 62 percent dissatisfaction rate with a protocol among surgeons that does not appear in hospitalist data signals a specialty-specific implementation problem, not a universal one.

- Real-time reporting to leadership: Aggregated specialty results surface to CMOs and CMIOs in real time rather than after weeks of survey processing. Governance decisions can respond to current physician sentiment rather than data that is already three months stale.

- Temporal trend analysis: Polling on the same question across multiple periods reveals whether physician opinion on a governance decision is stable, improving, or deteriorating following implementation.
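
The tagging and pattern-detection steps above can be sketched roughly as follows. This is a minimal illustration, not D.O.C. Lounge's actual implementation: the `Response` record, the function names, the sample counts, and the 20-point divergence threshold are all assumptions made for the example. Each response carries only a specialty tag, and a cohort is flagged when its dissatisfaction rate departs from the system-wide rate by at least the threshold.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Response:
    specialty: str      # cohort tag only; no physician identifier is stored
    dissatisfied: bool  # True if the answer registers dissatisfaction


def dissatisfaction_by_specialty(responses):
    """Return the dissatisfaction rate for each specialty cohort."""
    totals = defaultdict(int)
    negatives = defaultdict(int)
    for r in responses:
        totals[r.specialty] += 1
        negatives[r.specialty] += int(r.dissatisfied)
    return {s: negatives[s] / totals[s] for s in totals}


def divergent_specialties(responses, gap=0.20):
    """Flag cohorts whose rate differs from the system-wide rate by >= gap.

    The 0.20 default is an arbitrary illustrative threshold.
    """
    overall = sum(r.dissatisfied for r in responses) / len(responses)
    rates = dissatisfaction_by_specialty(responses)
    return {s: rate for s, rate in rates.items() if abs(rate - overall) >= gap}


def make_cohort(specialty, total, dissatisfied):
    """Build hypothetical responses for one cohort."""
    return [Response(specialty, i < dissatisfied) for i in range(total)]


# Hypothetical poll: 62% of surgeons dissatisfied, 20% in the other cohorts.
poll = (
    make_cohort("surgery", 50, 31)
    + make_cohort("hospitalist", 100, 20)
    + make_cohort("psychiatry", 50, 10)
)

if __name__ == "__main__":
    print(dissatisfaction_by_specialty(poll))
    print(divergent_specialties(poll))
```

With these made-up counts only the surgery cohort is flagged, which matches the scenario in the list: a problem specific to one specialty rather than a system-wide one.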

According to HIMSS (2024), clinical governance data quality requires real-time physician sentiment data that reflects genuine opinion across specialty cohorts. Named surveys fail both the real-time criterion and the genuine-opinion criterion simultaneously.

How CMIOs Use D.O.C. Polling for Clinical Governance

The practical governance application of anonymous specialty-stratified polling falls into three categories: protocol evaluation, administrative decision input, and EHR configuration feedback.

Protocol Evaluation

When a health system implements a new clinical protocol, named surveys on physician acceptance reliably overestimate adoption intent. The MGMA (2024) operational data on protocol implementation timelines shows that actual physician adoption routinely underperforms named-survey predictions, because those surveys captured performative agreement rather than genuine clinical intent.

Anonymous specialty-stratified polling on the same protocol surfaces which specialties have genuine clinical concerns, what those concerns are, and whether the concerns are shared or isolated.

Administrative Decision Input

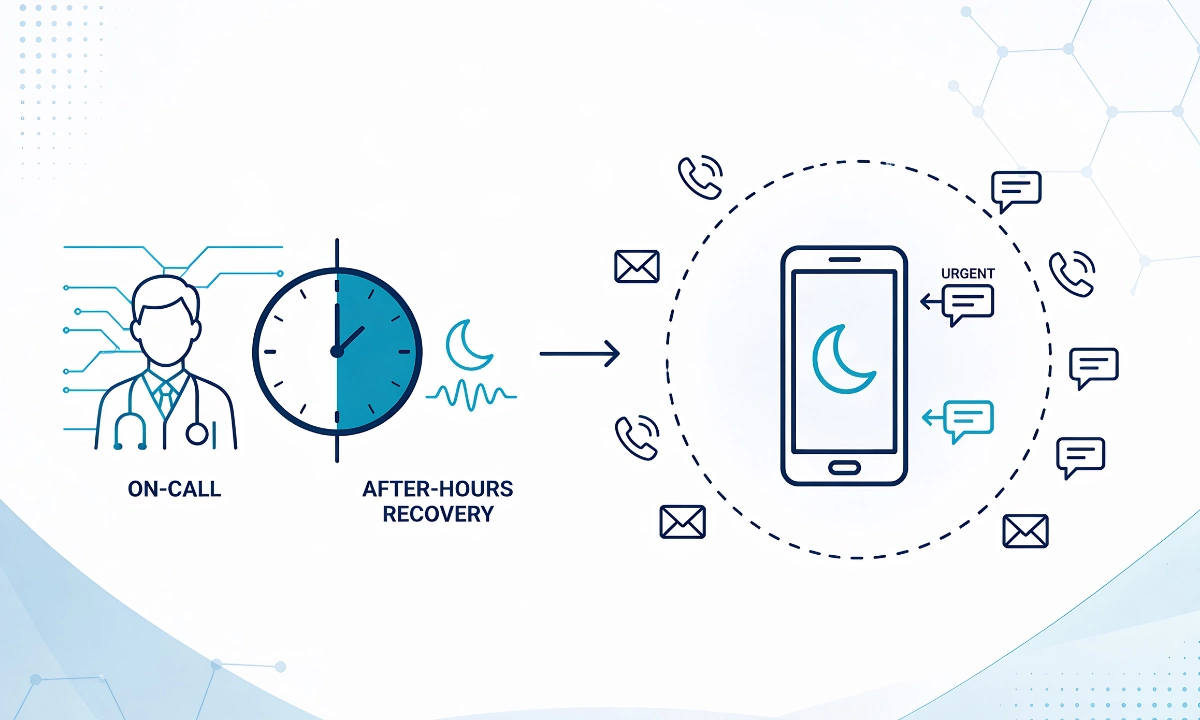

CMIOs and CMOs require data on physician response to staffing models, call schedule changes, and compensation structure decisions. These are precisely the categories where named-survey response distortion is highest. Physicians do not register genuine negative sentiment about administrative decisions when their name is attached.

The AMA (2025) notes that physician willingness to report administrative concerns anonymously is 4.1 times higher than in named instruments, a difference that represents the gap between actionable governance data and institutional noise.

EHR Configuration Feedback

EHR-related physician burden is among the most systematically underreported drivers of physician burnout. According to the AHRQ (2023), physicians attribute an average of 1.8 hours of daily time loss to EHR documentation tasks, but named surveys on EHR satisfaction consistently report lower dissatisfaction rates than objective time-loss measurements would suggest. The gap is a product of identity-based response suppression.

ClinicianCore's D.O.C. Lounge anonymous polling gives CMIOs the EHR feedback data that named surveys structurally cannot produce.

How ClinicianCore D.O.C. Lounge Helps: Anonymous Polling

D.O.C. Lounge Anonymous Polling Platform

D.O.C.’s anonymous polling removes identity bias from physician feedback: physicians respond accurately when their name is not attached to their answer. The system accepts specialty-tagged responses without exposing individual identity, then aggregates results across specialties and surfaces them to leadership in real time via the AI reporting layer. As part of ClinicianCore, D.O.C. Lounge operates within the same HIPAA-compliant infrastructure that governs all clinical communication on the platform.

For health system leadership, this means governance decisions no longer depend on survey data filtered through physician professional identity. The AI reporting layer identifies cross-specialty patterns, temporal trend shifts, and outlier specialty cohorts, delivering a quality of physician intelligence that named surveys cannot replicate. Explore the full capability at the anonymous physician polling platform and the broader D.O.C. Lounge.

The healthcare AI and innovation layer within D.O.C. Lounge is what converts raw polling data into pattern intelligence. Without AI-assisted aggregation, anonymous polling data is accurate but ungrouped. The AI layer identifies which specialty clusters drive divergent results, which question clusters are correlated, and which temporal windows show opinion shift. This is the governance signal that makes physician polling actionable rather than merely interesting.
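
As a rough illustration of the temporal signal mentioned above, the sketch below fits a least-squares slope to a cohort's dissatisfaction rate across polling periods and labels the trend. It is a simple statistical stand-in, not a description of the platform's AI layer; the function names, the sample rates, and the 0.02-per-period tolerance are assumptions for the example.

```python
def trend_slope(rates):
    """Least-squares slope of rates against period index (0, 1, 2, ...)."""
    n = len(rates)
    if n < 2:
        raise ValueError("need at least two polling periods")
    x_mean = (n - 1) / 2
    y_mean = sum(rates) / n
    numerator = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(rates))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator


def classify_trend(dissatisfaction_rates, tolerance=0.02):
    """Label a series of dissatisfaction rates.

    A rising rate means sentiment is deteriorating; a falling rate means it
    is improving. The tolerance is an arbitrary illustrative cutoff.
    """
    slope = trend_slope(dissatisfaction_rates)
    if slope > tolerance:
        return "deteriorating"
    if slope < -tolerance:
        return "improving"
    return "stable"


if __name__ == "__main__":
    # Hypothetical dissatisfaction rates for one cohort over four periods.
    print(classify_trend([0.30, 0.38, 0.45, 0.52]))
    print(classify_trend([0.40, 0.41, 0.40, 0.39]))
```

A slope over all periods is less sensitive to a single noisy poll than a first-to-last comparison, which is why it is used here.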

The D.O.C. Lounge module is built for physician-only participation, ensuring that the polling population reflects genuine clinical voices rather than administrative-layer dilution.

Named Surveys vs Anonymous Polling: A Structural Comparison

| Criterion | Named Surveys | D.O.C. Anonymous Polling |
|---|---|---|
| Sentiment accuracy | Performative; filtered through professional identity | 3.4x more accurate (JAMA, 2024) |
| Response completeness | Lower; sensitive questions skipped | 28% higher completion rate (JAMA, 2024) |
| Specialty stratification | None; responses averaged into undifferentiated pool | Specialty-stratified aggregation reveals cohort patterns |
| Reporting speed | Weeks to process and distribute | Real-time AI-assisted reporting to leadership |
| Administrative dissatisfaction capture | Systematically underreported by 4.1x (AMA, 2025) | Accurately captured; no identity suppression |
| Governance reliability | Produces misleading baseline data | Meets HIMSS (2024) clinical governance data standards |

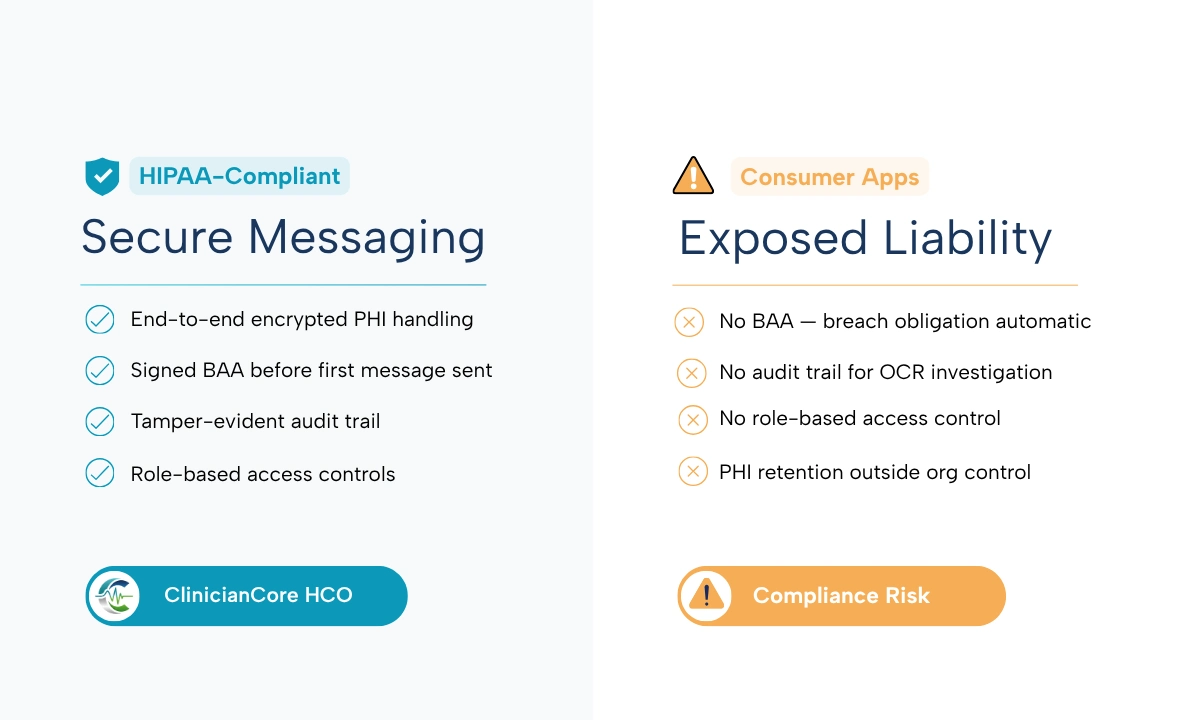

Is Anonymous Physician Polling HIPAA Compliant?

This question surfaces in every CMIO evaluation of anonymous polling tools, and the answer is specific: HIPAA’s Privacy Rule governs protected health information (PHI), defined as individually identifiable health information. Anonymous physician opinion data (polling responses that contain no individual identifier and no patient-linked data) does not constitute PHI under the HHS (2023) regulatory definition.

That said, the platform through which anonymous polling operates must itself meet HIPAA technical safeguard standards under 45 CFR §164.312. ClinicianCore is a secure, HIPAA-compliant unified clinical communication platform built exclusively for physicians, and D.O.C. Lounge operates within this infrastructure. Encryption in transit and at rest, access controls, and audit logging apply to the polling infrastructure regardless of whether individual poll responses contain PHI.

Health systems should verify that their chosen anonymous polling platform operates under a Business Associate Agreement where the platform processes any data that could be combined with other datasets to identify individuals. The D.O.C. Lounge architecture is designed to prevent this re-identification risk at the data model level, not as a policy overlay.
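
One common safeguard against re-identification in stratified reporting is to suppress any cohort too small to hide an individual. The sketch below shows that idea only; the source does not describe how D.O.C. Lounge implements its protection, so the function name, the input shape, and the minimum cohort size of 5 are all assumptions for illustration.

```python
def suppress_small_cohorts(counts_by_specialty, min_cohort=5):
    """Split cohorts into reportable rates and suppressed specialties.

    counts_by_specialty maps specialty -> (responses, dissatisfied).
    Cohorts with fewer than min_cohort responses are withheld so that a
    result cannot be traced back to one or two physicians. The default
    of 5 is an illustrative choice, not a regulatory requirement.
    """
    reportable = {}
    suppressed = []
    for specialty, (responses, dissatisfied) in counts_by_specialty.items():
        if responses < min_cohort:
            suppressed.append(specialty)
        else:
            reportable[specialty] = dissatisfied / responses
    return reportable, sorted(suppressed)


if __name__ == "__main__":
    # Hypothetical counts: (responses, dissatisfied) per specialty.
    counts = {
        "internal medicine": (40, 10),
        "orthopedics": (12, 6),
        "pediatric neurosurgery": (2, 2),
    }
    reportable, suppressed = suppress_small_cohorts(counts)
    print(reportable)
    print(suppressed)
```

In this made-up example the two-person cohort is withheld from the leadership report, since a 100 percent dissatisfaction rate among two physicians would effectively identify both.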

The Governance Imperative: Accurate Physician Data or None at All

Named physician surveys are not a neutral instrument. The identity exposure they create is a structural intervention that systematically distorts results before analysis begins. Health systems that base governance decisions on named-survey physician sentiment data are operating with filtered signals that overestimate satisfaction, underreport dissatisfaction, and miss specialty-specific patterns entirely.

The mechanism behind anonymous physician polling accuracy is not preference. It is behavioral: when physicians know their response cannot be traced to their identity, they answer accurately. When specialty stratification is applied to those accurate responses, the AI reporting layer surfaces patterns that would never appear in named-survey aggregates.

The D.O.C. Lounge anonymous polling platform is built for health system CMIOs who require physician sentiment data they can actually govern from, not data that reflects what physicians think leadership wants to hear. See healthcare AI and innovation to understand the AI layer that converts polling data into specialty-stratified governance intelligence.

Related reading: physician burnout reduction and ROI

References

- AMA (2025). Physician Survey Fatigue and Response Bias. American Medical Association. https://www.ama-assn.org/

- JAMA (2024). Anonymous vs Named Feedback Quality in Clinical Settings. JAMA Network. https://jamanetwork.com/

- HIMSS (2024). Clinical Governance Data Quality Requirements. Healthcare Information and Management Systems Society. https://www.himss.org/

- Physicians Foundation (2023). 2023 Survey of America’s Physicians: Practice Patterns and Perspectives. https://physiciansfoundation.org/

- AHRQ (2024). Patient Safety Culture: Physician Voice Fidelity Framework. Agency for Healthcare Research and Quality. https://www.ahrq.gov/

- AHRQ (2023). EHR Documentation Burden and Physician Time Loss. Agency for Healthcare Research and Quality. https://www.ahrq.gov/

- HHS (2023). HIPAA Privacy Rule: Protected Health Information Definition. US Department of Health and Human Services. https://www.hhs.gov/hipaa/

- MGMA (2024). Operational Data on Protocol Implementation Timelines. Medical Group Management Association. https://www.mgma.com/

Frequently Asked Questions

Why is anonymous polling more accurate than named surveys for physician feedback?

Anonymous physician polling is more accurate than named surveys because identity exposure activates social desirability bias, causing physicians to modify answers to align with institutional expectations. According to JAMA (2024), anonymous clinical feedback produces 3.4 times more accurate sentiment data. ClinicianCore’s D.O.C. Lounge removes this distortion by design.

How does D.O.C. aggregate anonymous physician polling data?

D.O.C. Lounge aggregates anonymous physician polling data by recording specialty metadata on each response without exposing individual identity. The AI reporting layer then groups responses by specialty cohort, detects cross-poll patterns, and delivers real-time specialty-stratified results to health system leadership. HIMSS (2024) identifies this real-time specialty segmentation as a governance data quality requirement.

How do health system CMIOs use D.O.C. polling for clinical governance?

Health system CMIOs use D.O.C. anonymous polling for three governance applications: protocol evaluation, where anonymous data reveals genuine specialty adoption intent; administrative decision input, where named surveys underreport dissatisfaction by a factor of 4.1 (AMA, 2025); and EHR configuration feedback, where identity-free responses capture burden data named surveys systematically miss. ClinicianCore’s D.O.C. Lounge delivers this data in real time.

Is anonymous physician polling HIPAA compliant?

Anonymous physician opinion polling data does not constitute protected health information under the HHS (2023) Privacy Rule definition, because it contains no individually identifiable health information. HIPAA compliance applies to the platform infrastructure: the polling system must meet 45 CFR §164.312 technical safeguard standards. ClinicianCore is a secure, HIPAA-compliant platform that meets these standards.

How does AI pattern identification work in D.O.C.’s polling system?

D.O.C.’s AI pattern identification processes specialty-stratified anonymous responses to detect three signal types: cross-specialty divergence (when one cohort’s opinion differs from the system-wide aggregate), temporal trend shifts (opinion change across multiple polling periods), and question-cluster correlation (related items where responses move together). According to HIMSS (2024), this AI-assisted pattern detection is what converts raw polling data into actionable governance intelligence.