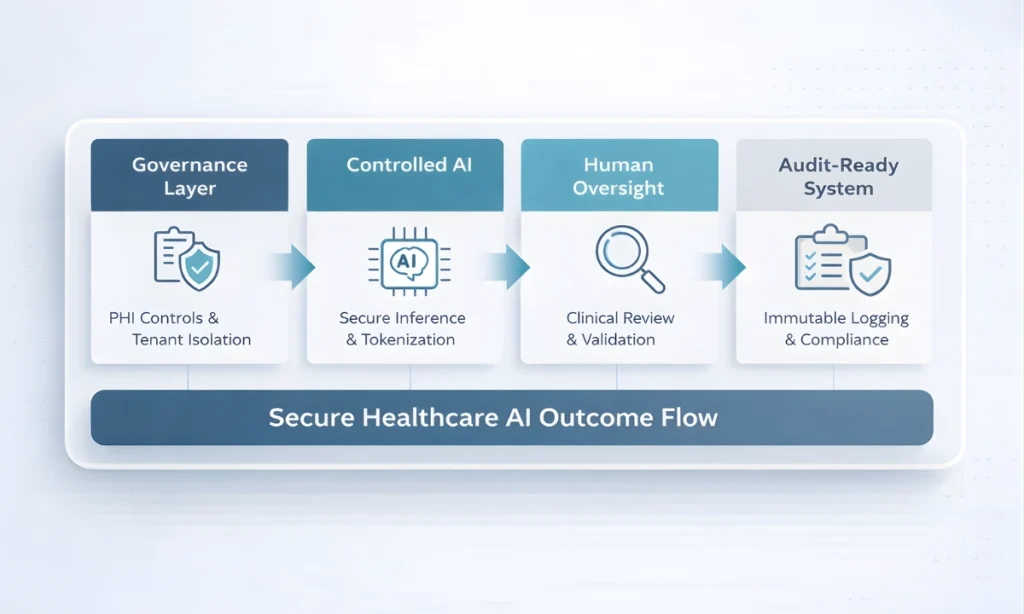

HIPAA-Compliant Multi-Tenant Healthcare AI Systems require a rigorously structured engineering framework that integrates artificial intelligence into clinical and administrative workflows while guaranteeing continuous protection, encryption, and logical isolation of Protected Health Information (PHI).

This framework enforces regulatory compliance through automated audit logging, deterministic data routing, tokenized PHI handling, and programmable governance controls within multi-tenant SaaS environments.

Executive Summary: HIPAA-Compliant Healthcare AI Architecture for CIOs

The integration of artificial intelligence into enterprise healthcare is no longer experimental; it is infrastructural. However, deploying AI in regulated environments requires more than API integration. It demands enforceable data governance, strict multi-tenant isolation, and embedded compliance controls.

In healthcare, clinical fidelity and security architecture are inseparable. AI systems must operate within deterministic safeguards that prevent PHI leakage, cross-tenant exposure, and probabilistic model drift. Compliance cannot be validated after deployment; it must be embedded directly into middleware, CI/CD pipelines, and audit infrastructure.

While basic AI adoption may yield 20–35% productivity gains, governance-controlled architectures can deliver 50–60% efficiency improvements without amplifying regulatory risk. For healthcare CIOs and enterprise architects, the mandate is clear: scalable AI requires mathematically enforceable infrastructure, not trust in model behavior alone.

Key Takeaways

The following table summarizes the non-negotiable architectural pillars required for deploying HIPAA-compliant healthcare AI at enterprise scale:

| Domain | Enterprise Requirement | Why It Matters |
|---|---|---|
| AI Governance | Programmable rule enforcement in CI/CD pipelines | Prevents architectural drift and security vulnerabilities |
| PHI Protection | Tokenization, DLP layers, and zero-retention contracts | Eliminates data leakage risk to external LLM providers |
| Multi-Tenancy | Row-Level Security (RLS) and tenant-aware routing | Prevents cross-tenant data exposure in SaaS environments |
| Hallucination Control | Retrieval-Augmented Generation (RAG) with deterministic fallback | Reduces fabricated outputs in clinical contexts |
| Auditability | Immutable AI interaction ledgers | Ensures forensic traceability and HIPAA audit readiness |
| Human Oversight | Mandatory human-in-the-loop validation workflows | Prevents cognitive offloading and preserves clinical accountability |
| Infrastructure Design | Compliance embedded into middleware and system architecture | Makes regulatory adherence mathematically enforceable |

Designing HIPAA-Compliant Multi-Tenant Healthcare AI Systems

Designing HIPAA-Compliant Multi-Tenant Healthcare AI Systems requires aligning artificial intelligence capabilities with enforceable security architecture from the outset. Unlike basic AI integrations, these systems must embed tenant isolation, PHI tokenization, deterministic audit logging, and governance enforcement directly into the infrastructure layer. The design objective is not merely model performance, but mathematically enforceable compliance within shared SaaS environments.

At enterprise scale, HIPAA-Compliant Multi-Tenant Healthcare AI Systems must treat regulatory adherence as an architectural constraint, not a post-deployment validation exercise. Every data pathway, model interaction, and middleware layer must operate within clearly defined isolation boundaries that prevent cross-tenant exposure and uncontrolled probabilistic behavior.

The Myth of Autonomous AI in Healthcare Development

The prevailing narrative surrounding artificial intelligence frequently overstates the autonomy and reliability of Large Language Models (LLMs). In enterprise healthcare settings, treating an LLM as an autonomous agent is a fundamental architectural error. LLMs are probabilistic engines; they generate responses based on statistical likelihoods rather than deterministic logic. In clinical environments, probabilistic outputs without stringent, deterministic guardrails inevitably lead to architectural drift and systemic risk.

LLM healthcare development requires an acknowledgment that models possess no inherent understanding of the HIPAA Security Rule guidelines or clinical accuracy.

When developers allow models to operate without tightly constrained parameters, systems become vulnerable to prompt injection, data bleeding across tenant boundaries, and the generation of syntactically correct but clinically invalid code or patient summaries. To utilize these models safely, enterprise engineers must relegate AI to the role of an advanced augmentation tool—one that operates strictly within predefined, heavily monitored execution environments. By discarding the myth of autonomy, organizations can focus on building robust middleware layers that intercept, validate, and sanitize all AI inputs and outputs before they interact with primary clinical databases.

The Foundation Layer — AI Governance in HIPAA-Compliant Healthcare AI Architecture

Establishing a sustainable healthcare AI compliance framework requires formalizing AI governance at the foundational layer of the software development lifecycle.

AI governance in healthcare is not a theoretical policy; it is a set of programmable rules enforced through code. A mature healthcare AI governance framework formalizes these controls into enforceable architectural standards.

Coding Standards and Deterministic Testing

AI-assisted code generation must be subjected to static application security testing (SAST) and dynamic application security testing (DAST) pipelines.

Engineers must deploy automated linters and security scanners that evaluate all AI-generated logic against secure coding references such as the OWASP Top 10 before it is merged into production branches.

When governance frameworks are embedded directly into CI/CD pipelines, organizations frequently observe 50–60% efficiency improvements in scaffolding, testing automation, and compliance middleware generation. These gains are not the result of autonomous AI intelligence, but of deterministic constraint layering that reduces rework and eliminates structural ambiguity.

Encryption Mandates and PHI Handling Rules

HIPAA-compliant healthcare AI architecture requires that no PHI is ever processed by an external, multi-tenant AI endpoint without prior de-identification. Architecture teams must implement data loss prevention (DLP) APIs and internal Named Entity Recognition (NER) models to intercept payloads. This sanitization layer scrubs explicit patient identifiers, replacing them with secure tokens before the payload reaches the LLM. Once the model returns the output, the middleware reverses the tokenization, restoring context locally within the secure perimeter.
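
The tokenize-then-restore round trip described above can be sketched as follows. This is a minimal illustration, not a production scrubber: the two regular expressions, the `TokenVault` class, and the token format are all assumptions made for the example, and a real sanitization layer would rely on a DLP service and a trained NER model rather than pattern matching alone.

```python
import re
import secrets

# Hypothetical patterns for illustration only; real PHI detection needs
# far broader coverage (names, dates, addresses, free-text identifiers).
PHI_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d{6,10}\b"),
}


class TokenVault:
    """In-memory stand-in for a tenant-partitioned tokenization vault."""

    def __init__(self) -> None:
        self._token_to_value = {}

    def tokenize(self, text: str) -> str:
        """Replace each detected identifier with an opaque random token."""
        for label, pattern in PHI_PATTERNS.items():

            def _swap(match, label=label):
                token = f"[{label}_{secrets.token_hex(4)}]"
                self._token_to_value[token] = match.group(0)
                return token

            text = pattern.sub(_swap, text)
        return text

    def detokenize(self, text: str) -> str:
        """Restore the original values; intended to run only inside the secure perimeter."""
        for token, value in self._token_to_value.items():
            text = text.replace(token, value)
        return text
```

In this sketch only the output of `tokenize` would be sent to the external model, and `detokenize` would be applied to the model's response after it returns to the middleware.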

Where external LLM providers are utilized, enterprise contracts must enforce zero data retention guarantees, audit-verifiable processing agreements, and regional data residency controls. Compliance is not achieved through policy statements alone but through enforceable technical and contractual safeguards.

Retrieval-Augmented Guardrails

To constrain model hallucinations, enterprise architectures utilize Retrieval-Augmented Generation (RAG). By grounding the LLM’s responses strictly in verified, proprietary clinical corpuses or approved compliance manuals, engineers sharply reduce the model's opportunity to fabricate information. Compliance-by-design principles dictate that if a query cannot be satisfied by the retrieval index, the system must trigger a deterministic fallback rather than allowing the model to guess.
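
One way to express the deterministic fallback is to gate the model call on retrieval quality. The sketch below assumes a retriever that returns scored passages; `retrieve`, `generate`, `Passage`, and the `MIN_RELEVANCE` threshold are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable

FALLBACK_MESSAGE = "No approved source covers this question. Escalating to a human reviewer."
MIN_RELEVANCE = 0.75  # assumed threshold; tune against a labeled evaluation set


@dataclass(frozen=True)
class Passage:
    source_id: str
    text: str
    score: float  # retriever similarity, assumed to lie in [0, 1]


def answer_with_guardrail(
    question: str,
    retrieve: Callable[[str], list],
    generate: Callable[[str, list], str],
) -> dict:
    """Invoke the model only when retrieval finds sufficiently relevant passages."""
    grounded = [p for p in retrieve(question) if p.score >= MIN_RELEVANCE]
    if not grounded:
        # Deterministic path: the model is never called, so it cannot guess.
        return {"answer": FALLBACK_MESSAGE, "sources": [], "fallback": True}
    return {
        "answer": generate(question, grounded),
        "sources": [p.source_id for p in grounded],
        "fallback": False,
    }
```

Returning the source identifiers alongside the answer also gives reviewers a direct way to check each response against the approved corpus.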

Architecting Multi-Tenant Healthcare AI Systems: Isolation as a Security Imperative

SaaS scalability depends on multi-tenancy, but in healthcare, improper multi-tenancy is a critical liability.

Designing a secure multi-tenant healthcare infrastructure requires absolute certainty that one hospital system’s data (Tenant A) cannot influence or be accessed by another (Tenant B), particularly when both tenants share the same underlying LLM infrastructure.

Logical Isolation and Row-Level Security (RLS)

While physically segregating databases for every tenant is often cost-prohibitive, logical isolation must be ironclad. Engineers must implement Row-Level Security (RLS) at the database tier (e.g., PostgreSQL). When an API request is made, a signed JSON Web Token (JWT) carries the tenant_id claim for the authenticated tenant. The database engine natively enforces policies that restrict query execution solely to rows matching that tenant_id, ensuring the AI application cannot inadvertently retrieve cross-tenant records.
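
A minimal sketch of this pattern for PostgreSQL follows. The table name `clinical_notes`, the setting name `app.tenant_id`, and the helper `tenant_scope_statement` are assumptions for illustration; the `%s` placeholder follows the psycopg parameter style, and the claims dictionary is assumed to come from a JWT whose signature has already been verified.

```python
import uuid

# PostgreSQL DDL, applied once by a migration (names are hypothetical).
# FORCE makes the policy apply to the table owner as well; superusers and
# roles with BYPASSRLS are still exempt, so the app should not use them.
RLS_MIGRATION = """
ALTER TABLE clinical_notes ENABLE ROW LEVEL SECURITY;
ALTER TABLE clinical_notes FORCE ROW LEVEL SECURITY;
CREATE POLICY tenant_isolation ON clinical_notes
    USING (tenant_id = current_setting('app.tenant_id')::uuid);
"""


def tenant_scope_statement(claims: dict):
    """Build the statement that binds the current transaction to one tenant.

    Raises KeyError if the claim is absent and ValueError if it is not a
    well-formed UUID, so a malformed token never reaches the database.
    """
    tenant_id = str(uuid.UUID(claims["tenant_id"]))
    # The third argument (true) limits the setting to the current transaction.
    return "SELECT set_config('app.tenant_id', %s, true)", (tenant_id,)
```

The request handler would execute the returned statement at the start of each transaction, before any query issued on behalf of the AI application.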

Tenant-Aware Routing Middleware

A HIPAA-compliant healthcare AI architecture requires tenant-aware routing. As prompts are constructed, the middleware must inject tenant-specific context and boundaries. For instance, customized AI system prompts should dynamically load the specific clinical protocols of the authenticated tenant, ensuring that the model’s behavior aligns with local governance without exposing the protocols of other tenants.
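
As a rough illustration, prompt construction can be keyed strictly on the authenticated tenant so that no other tenant's protocols are ever loaded. The registry contents, `TenantProfile`, and `build_system_prompt` below are hypothetical; a real system would read protocols from a tenant-partitioned store.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TenantProfile:
    tenant_id: str
    protocols: tuple


# Hypothetical in-memory registry standing in for a partitioned config store.
TENANT_REGISTRY = {
    "tenant-a": TenantProfile("tenant-a", ("Sepsis screening follows protocol A-12.",)),
    "tenant-b": TenantProfile("tenant-b", ("Discharge summaries follow protocol B-07.",)),
}


class UnknownTenantError(LookupError):
    """Raised when the authenticated tenant has no registered profile."""


def build_system_prompt(authenticated_tenant_id: str) -> str:
    """Build a system prompt from the authenticated tenant's protocols only."""
    profile = TENANT_REGISTRY.get(authenticated_tenant_id)
    if profile is None:
        # Fail closed: never fall back to a shared or default protocol set.
        raise UnknownTenantError(authenticated_tenant_id)
    rules = "\n".join(f"- {rule}" for rule in profile.protocols)
    return (
        f"You are assisting clinicians at tenant {profile.tenant_id}.\n"
        f"Follow only these protocols:\n{rules}"
    )
```

The tenant identifier passed in should come from the verified session, never from user-supplied prompt text.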

Shared Infrastructure vs. Segregated Data

Enterprises must strike a balance between shared computational resources (for cost efficiency) and segregated storage. While the AI inference engine may be shared, the vector databases utilized for RAG, the audit logs, and the secure tokenization vaults must remain logically partitioned.

HIPAA Compliance as Embedded Infrastructure

Compliance cannot be an afterthought or a manual checklist applied prior to deployment. In mature HIPAA-compliant communication systems, regulatory adherence is codified directly into infrastructure as a persistent, automated background process.

Automated Audit Logging

Every interaction with the AI model must be logged in an immutable, append-only ledger. This includes the sanitized prompt, the model’s output, the user identifier, the timestamp, and the specific version of the model queried. This automated audit logging provides a verifiable chain of custody essential for HIPAA compliance audits and post-incident forensic analysis.
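
A hash-chained log is one way to make such a ledger tamper-evident. The sketch below is an in-memory illustration with hypothetical names (`AuditLedger`, `GENESIS_HASH`); it detects modification but does not by itself make storage immutable, which would additionally require write-once storage or an equivalent control.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # assumed sentinel for the first entry's predecessor


def _digest(record: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLedger:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self._entries = []

    def append(self, *, user_id: str, model_version: str, sanitized_prompt: str, output: str) -> dict:
        record = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "model_version": model_version,
            "sanitized_prompt": sanitized_prompt,
            "output": output,
            "prev_hash": self._entries[-1]["hash"] if self._entries else GENESIS_HASH,
        }
        record["hash"] = _digest(record)
        self._entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited, removed, or reordered entry breaks it."""
        prev = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Only the sanitized prompt is recorded here, consistent with the requirement that raw identifiers stay out of AI-facing payloads.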

Encryption Protocols

All AI systems that handle PHI must enforce AES-256 encryption at rest and TLS 1.2 or higher for data in transit. Service-to-service communication within the microservices cluster (e.g., between the application backend and the vector database) must utilize mutual TLS (mTLS) to limit lateral movement inside the network in the event of a breach.
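
For the in-transit requirements, a server-side context built with Python's standard `ssl` module might look like the sketch below. The function name and the certificate path parameters are assumptions for illustration; encryption at rest is handled elsewhere and is not shown.

```python
import ssl
from typing import Optional


def build_mtls_server_context(
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
    client_ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Server context that refuses TLS below 1.2 and requires a client certificate."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # CERT_REQUIRED on a server context is what makes the TLS mutual:
    # the handshake fails unless the client presents a trusted certificate.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if client_ca_file:
        # Trust only the internal CA that issues service certificates.
        ctx.load_verify_locations(cafile=client_ca_file)
    return ctx
```

In a service mesh the same policy is often enforced by sidecar proxies instead, in which case application code like this would not be needed.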

Compliance Middleware Generation

Architects should deploy compliance middleware that acts as a proxy between the user application and the AI models. This middleware is responsible for real-time policy enforcement, such as rate-limiting suspicious query patterns that may indicate a data exfiltration attempt, and validating that required consent flags are present before processing clinical summarizations.
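
The two checks named above, consent validation and rate limiting, can be sketched as a single gate that runs before any model call. `ComplianceGate`, the request field names (`task`, `consent_on_file`), and the default limits are hypothetical choices for this example.

```python
import time
from collections import defaultdict, deque


class PolicyViolation(Exception):
    """Raised when a request must not be forwarded to the model."""


class ComplianceGate:
    """Sliding-window rate limit per user plus a consent check for clinical summaries."""

    def __init__(self, max_requests: int = 30, window_seconds: float = 60.0, clock=time.monotonic):
        self._max_requests = max_requests
        self._window_seconds = window_seconds
        self._clock = clock  # injectable so the window can be tested deterministically
        self._history = defaultdict(deque)

    def check(self, user_id: str, request: dict) -> None:
        """Return silently if the request may proceed; raise PolicyViolation otherwise."""
        if request.get("task") == "clinical_summary" and not request.get("consent_on_file", False):
            raise PolicyViolation("consent flag missing for clinical summarization")
        now = self._clock()
        window = self._history[user_id]
        # Drop timestamps that have aged out of the window.
        while window and now - window[0] >= self._window_seconds:
            window.popleft()
        if len(window) >= self._max_requests:
            raise PolicyViolation("rate limit exceeded; possible exfiltration pattern")
        window.append(now)
```

Rejected requests would normally also be written to the audit ledger so that repeated violations can be investigated.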

Human-in-the-Loop Oversight — Preventing Cognitive Offloading

Highly capable LLMs introduce the psychological risk of cognitive offloading: the tendency for human operators to trust automated outputs blindly and relax their own critical oversight. To counter this, human-in-the-loop AI oversight must be structurally mandated by the software.

Senior Review Mechanisms

Within development workflows, all AI-generated code must undergo mandatory peer review by senior engineers. The pull request infrastructure should automatically flag commits heavily authored by AI tools, routing them to specialized architectural review boards to verify structural integrity and security compliance.

Clinical Validation Workflows

In clinical applications, the UI/UX must force deliberate human interaction. AI-generated diagnostic summaries or draft responses to patient portals must never be auto-sent. The interface must require the clinician to actively review, modify, and cryptographically sign off on the AI’s draft, ensuring the provider retains ultimate medical and legal responsibility.
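
A release gate of this kind can be sketched by binding a signature to the exact text the clinician approved. The example below uses an HMAC with a per-clinician key purely as a stand-in; the function names are hypothetical, and a production system would more likely use asymmetric signatures tied to the clinician's credentials.

```python
import hashlib
import hmac
from typing import Optional


class UnsignedDraftError(Exception):
    """Raised when a draft is released without a valid clinician sign-off."""


def sign_off(final_text: str, clinician_id: str, clinician_key: bytes) -> str:
    """Produce a signature binding the clinician to the exact reviewed text."""
    message = f"{clinician_id}\n{final_text}".encode("utf-8")
    return hmac.new(clinician_key, message, hashlib.sha256).hexdigest()


def release_to_portal(
    final_text: str,
    clinician_id: str,
    clinician_key: bytes,
    signature: Optional[str],
) -> str:
    """Return the text for sending only if the signature matches this exact text."""
    expected = sign_off(final_text, clinician_id, clinician_key)
    if signature is None or not hmac.compare_digest(expected, signature):
        # Covers both a missing sign-off and text edited after signing.
        raise UnsignedDraftError("draft requires a valid clinician sign-off")
    return final_text
```

Because the signature covers the text itself, any change made after review invalidates the sign-off and forces a fresh review.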

Architectural Integrity Checks

Site reliability engineering (SRE) teams must conduct routine “red team” exercises against the AI infrastructure, intentionally attempting to bypass PHI filters or trigger cross-tenant data leaks. These continuous security review processes ensure the guardrails function deterministically in production environments.

Quantifying Enterprise Impact

Deploying a rigorous, governance-first HIPAA-compliant healthcare AI architecture yields measurable improvements in both operational efficiency and risk reduction. While the initial engineering overhead is higher, the long-term enterprise impact is substantially positive. By formalizing guardrails, organizations empower their developers and clinicians to utilize AI tools at maximum velocity without fear of regulatory breaches.

Governance does not slow down innovation; it provides the structural stability required for rapid, scalable output.

Beyond velocity, governance materially improves implementation accuracy. In unregulated AI-assisted development environments, first-pass success rates for complex features often hover around 30%, requiring significant post-generation debugging and architectural correction.

Under governance-controlled development pipelines, organizations report first-pass implementation accuracy exceeding 70%, as deterministic constraints eliminate hallucinated logic and structural drift before code reaches production review.

Organizations implementing HIPAA-Compliant Multi-Tenant Healthcare AI Systems consistently report stronger audit readiness and reduced cross-tenant exposure risk.

Operational Impact Under Governance-Controlled AI Architecture

| Domain | Unregulated AI Usage | Governance-Controlled AI Architecture |
|---|---|---|
| Development Velocity | ~3x acceleration with elevated defect risk | ~2.5x acceleration under controlled, review-driven conditions |
| First-Pass Success Rate | ~30% for complex workflows | 70–75% under deterministic constraints |
| Multi-Tenant Isolation Errors | Elevated risk of cross-tenant leakage | Deterministically prevented via RLS and tenant-aware middleware |
| HIPAA Compliance Enforcement | Manual validation and checklist audits | Automated logging, tokenization, and middleware enforcement |
| Documentation & Testing Automation | 30–50% efficiency gains | 70–85% efficiency under structured specifications |

Enterprise HIPAA-Compliant Healthcare AI Architecture vs Basic AI Adoption

To fully understand the infrastructural requirements, technical leaders must distinguish between superficial AI adoption and true enterprise architecture.

| Feature Layer | Basic API Wrapper Adoption | Enterprise HIPAA-Compliant AI Architecture |
|---|---|---|
| Data Flow | Direct API calls to external LLM | Intercepted by middleware, scrubbed by NER, tokenized |
| Tenancy Model | Shared database schema, app-level filtering | Strict Row-Level Security (RLS), isolated vector stores |
| Hallucination Control | Relying on base model logic | Retrieval-Augmented Generation (RAG) with clinical corpuses |
| Oversight | End-user discretion | Enforced UI friction, mandatory human-in-the-loop sign-off |
| Auditing | Standard application error logs | Immutable AI interaction ledgers, token-mapped tracing |

Conclusion — Designing AI Systems That Deserve Clinical Trust

In enterprise healthcare environments, compliance cannot be aspirational. It must be mathematically enforceable through infrastructure, policy engines, and deterministic system design.

The deployment of generative models in healthcare is not merely a software engineering challenge; it is a profound responsibility. A true HIPAA-compliant healthcare AI architecture requires moving beyond the hype of basic API wrappers and committing to the hard work of building deterministic, resilient, and audit-ready AI infrastructure. By enforcing multi-tenant isolation, embedding compliance into the data pipeline, and mandating human oversight, organizations can secure their platforms against probabilistic failures. Ultimately, enterprise healthcare systems will only adopt AI at scale when the architecture itself mathematically guarantees the protection of patient data.

Designing these systems with an uncompromising focus on governance ensures the delivery of platforms that earn and deserve clinical trust.

Organizations pursuing a broader enterprise healthcare AI strategy must anchor innovation in governance-first infrastructure.

The long-term viability of HIPAA-Compliant Multi-Tenant Healthcare AI Systems depends on governance-first infrastructure design rather than model capability alone.

Frequently Asked Questions

What is HIPAA-compliant healthcare AI architecture?

HIPAA-compliant healthcare AI architecture is a structured engineering framework that integrates artificial intelligence while strictly protecting patient data.

Why is multi-tenant isolation critical in healthcare AI?

Multi-tenant isolation prevents the data of one healthcare organization from being accessed or influenced by another organization sharing the same software platform. Using techniques like Row-Level Security (RLS), engineers ensure that AI models only process and retrieve data explicitly belonging to the authenticated tenant. This strict boundary is a fundamental requirement for SaaS security.

Can LLMs ensure regulatory compliance?

No, Large Language Models are probabilistic engines and cannot inherently guarantee regulatory compliance or data security. Compliance must be enforced by external, deterministic middleware layers that govern data inputs, sanitize patient identifiers, and restrict AI outputs. The architecture around the model, not the model itself, provides the compliance.

What is the role of governance in AI-assisted development?

Governance acts as the programmable rule set that dictates how AI can be safely utilized within the software development lifecycle. It mandates security testing, code reviews, and architectural guardrails to prevent AI from introducing vulnerabilities or drift into the codebase. This ensures all AI-generated logic meets enterprise security standards.

How does human oversight prevent AI risk?

Human oversight mitigates the risk of cognitive offloading, where users blindly trust automated, potentially flawed outputs. By mandating human-in-the-loop workflows, such as requiring clinicians to review and sign off on AI-generated summaries, the architecture ensures clinical accuracy. It maintains the essential layer of human accountability and professional judgment.